Hello,

I am currently working in CADET Process to perform some parameter estimation using a NoBinding model and some experimental data. My goal is to estimate column bed porosity ad axial dispersion coefficient.

I have been following the CADET Process guidance documents and making changes for my applications as needed, however, when I get to the point of solving the optimization problem, I am running into an error that I cannot decipher. I have attached a copy of my script and the dataset I’m working with.

I am quite new to Python/Jupyter so please bear with me.

Best,

Yemi

75cmph_1.csv (13.8 KB)

process_test_MSSpcc.ipynb (55.7 KB)

Hi Yemi,

I assume the problem is an outdated version of CADET-Process. I’m currently working on a new release. Please give me some days and I will let you know when it’s done. I’m also happy to assist in a call if there are more problems.

Best

Jo

Thank you Jo.

I will await the update.

Hey Yemi,

I have not forgotten you but I’m still working on finalizing some of the new features. If you already want to test the new version, please checkout the dev branch off of Github. It already includes a lot of the improvements. To install it, run the following command from repo’s root directory. I’d advise you to use a separate Python/conda environment to avoid dependencies issues.

pip install .

Please also make sure that some of the dependencies are upgraded:

pip install hopsy --upgrade # Should be 1.0.0

pip install polyround --upgrade # Should be 0.2.0

I’m happy to assist if you need help. And in any way, the new release will not take much longer (I hope).

Thank you for the update Jo.

I will look into getting the update as you’ve outlined. I’m not quite sure yet about creating Environments and I don’t want to mess anything up.

I do have other projects and things I can work on in the interim so I can wait for the release as well.

All the best.

-Yemi

Thanks for your understanding.

I feel your concern, I was in a similar situation not so long ago. But let me tell you, the fear of messing things up is the reason why environments exist in the first place. It’s well worth investing a couple of minutes to learn about them. It’s really not difficult and it removes a critical point of failure. For some introduction, see here. Again, I’m happy to assist if there are questions/problems.

Hi Jo,

So I was able to create a clone of my base environment and installed the dev branch repo without any errors. I also ran the commands to update hopsy and polyround.

I do need some help in understanding how to run this updated CADET Process build. Since I cloned my base environment that already had CADET Process installed on it, which if I am understanding correctly now means I have two versions of CADET Process installed in this new environment, how do I run a notebook with the dev branch install?

I am assuming , for example, that I am no longer running “from CADETProcess.processModel import ComponentSystem”

Hi,

no, it’s not necessary to change the code in any way. You only need to “activate” the corresponding environment. How this is done, depends a bit on your personal setup.

Usually, this is done using the command line. For example, if you have an environment called test, it would look something like this:

First, let’s check the version of Python being used by default (if you use Windows, use where instead of which).

jo@matebook:~$ which python3

>>> /usr/bin/python3

Now, we activate the environment:

jo@matebook:~$ conda activate test

(test) jo@matebook:~$

Note that after activation the name of the environment is shown in front of my user name. Also, when asking which python is used, the path of the python version changes:

(test) jo@matebook:~$ which python3

>>> /home/jo/software/miniconda3/envs/test/bin/python3

This also adds/removes all the different packages you installed in the corresponding environments to and from the “path” (This is what controls which programs the computer finds when running a command).

Then you can for example start your IDE from the command line. I’m sure there are other ways if you use other IDEs such as VS-Code but you’d have to look them up.

Thanks Jo.

So this error came back when I tried to rerun my script as is using the dev-branch install.

---------------------------------------------------------------------------

ModuleNotFoundError Traceback (most recent call last)

Input In [3], in <cell line: 6>()

4 from pathlib import Path

5 from cadet import Cadet

----> 6 from CADETProcess.processModel import ComponentSystem

File ~\Anaconda3\envs\CADETProcessTest\lib\site-packages\CADETProcess\__init__.py:31, in <module>

29 from . import metric

30 from . import performance

---> 31 from . import optimization

32 from . import comparison

33 from . import stationarity

File ~\Anaconda3\envs\CADETProcessTest\lib\site-packages\CADETProcess\optimization\__init__.py:5, in <module>

3 from .optimizer import *

4 from .scipyAdapter import COBYLA, TrustConstr, NelderMead, SLSQP

----> 5 from .pymooAdapter import NSGA2, U_NSGA3

File ~\Anaconda3\envs\CADETProcessTest\lib\site-packages\CADETProcess\optimization\pymooAdapter.py:11, in <module>

9 from pymoo.core.problem import Problem

10 from pymoo.factory import get_reference_directions

---> 11 from pymoo.util.termination.default import MultiObjectiveDefaultTermination

12 from pymoo.core.repair import Repair

14 from CADETProcess.dataStructure import UnsignedInteger, UnsignedFloat

ModuleNotFoundError: No module named 'pymoo.util.termination'

Hey, this suggests to me, that CADET-Process is still not installed correctly. If you look at the dev branch, this line is already updated to pymoos latest API.

To install the dev branch propertly, please navigate to the directory, run

git checkout dev

Then activate your environment

conda activate my_env

And finally install CADET-Process:

pip install .

Thank you again Jo for taking the time.

I was able to install it properly following the instructions. I think the issue was that I was using git through python and I guess I wasn’t using the commands properly.

Now that I was able to run CADETProcess, I have encountered a new error output on running the comparator. Please see below:

---------------------------------------------------------------------------

AttributeError Traceback (most recent call last)

Input In [5], in <cell line: 7>()

5 metrics = comparator.evaluate(simulation_results)

6 print(metrics)

----> 7 _ = comparator.plot_comparison(simulation_results)

8 optimization_problem = OptimizationProblem('MSSpCC')

9 optimization_problem.add_evaluation_object(process)

File ~\Anaconda3\envs\CADETProcessTest\lib\site-packages\CADETProcess\comparison\comparator.py:173, in Comparator.plot_comparison(self, simulation_results, axs, figs, plot_directory, file_name, show, plot_individual)

167 def plot_comparison(

168 self, simulation_results, axs=None, figs=None,

169 plot_directory=None, file_name=None,

170 show=True, plot_individual=False):

172 if axs is None:

--> 173 figs, axs = self.setup_comparison_figure(plot_individual)

174 if not isinstance(figs, list):

175 figs = [figs]

File ~\Anaconda3\envs\CADETProcessTest\lib\site-packages\CADETProcess\comparison\comparator.py:160, in Comparator.setup_comparison_figure(self, plot_individual)

156 comparison_axs_ind.append(axs)

157 plt.close(fig)

159 comparison_axs_ind = \

--> 160 np.array(comparison_axs_ind).reshape(comparison_axs_all.shape)

162 if plot_individual:

163 return comparison_fig_ind, comparison_axs_ind

AttributeError: 'AxesSubplot' object has no attribute 'shape'

Thank you.

Hey,

can you please make sure that you have the latest version of the dev branch? I think this issue was already fixed.

Since I occasionally force-push to dev, I suggest that you run the following command to avoid conflicts.

Warning: This will overwrite all local changes you’ve made in the repo. So use with care!

git fetch && git reset origin/dev --hard

For future releases I will try to work more with feature branches but recently too many things have been evolving simultaneously which made it hard for me to keep everything in sync.

Also, future releases will be much more stable than the last ones. I’m constantly adding more tests and the feedback of all of you early users really helps me a lot with that. So thanks for you patience and for testing the software. If there are any questions/issues, I’m always very happy to help, also in a short call or so.

Cheers,

Jo

Hello again.

Thanks again to you and Luka for taking the time to chat this morning.

As we discussed, please find attached my utilities notebook that I reference in the script attached to my first post.

cputils_devbranch.ipynb (5.3 KB)

Best,

-Yemi

Hey Yemi,

first of all sorry for the late reply. We looked at your script and updated it with some features that are currently not in the documentation of CADET-Process. The script can be found below the post.

Your parameter estimation runs on my system perfectly with the current changes in the dev Branch of CADET-Process. So please make sure you have the latest version of the dev branch.

In the following, I want to point out the major changes in the script:

-

The first part of the script is used to import all parts of CADET-Process which are needed for the simulation. There you used a utils file to do so. I would suggest making the script as readable as possible and using the object-oriented approach of CADET-Process in each script so that you just import the parts which are needed for modeling your process.

-

In your optimization problem you added the objective but did not specify the number of objectives (n_objectives) which is one (1) by default. But since you used “Shape” as a metric without the derivative, the number of objectives are three (3). An easy way to specify it is by using the comparators attribute n_metrics. The code would look like this:

optimization_problem.add_objective(comparator,n_objectives=comparator.n_metrics, requires=[process_simulator])

- A callback function was added which can be specified by the user. In this case, the function is used to plot the comparison between your reference and the simulation. This helps you visualize the progress of your parameter estimation while the optimization is running. The code for the callback looks like this:

def callback(

simulation_results, individual, evaluation_object, callbacks_dir):

comparator.plot_comparison(

simulation_results,

file_name=f'{callbacks_dir}/{individual.id}_{evaluation_object}_comparison.png',

show=False

)

optimization_problem.add_callback(

callback, requires=[process_simulator], frequency= 1, keep_progress=True

)

I hope this helps you. If you need further help, please feel free to reach out.

Best,

Lukas

Code_Yemi.ipynb (6.0 KB)

Thank you very much Lukas.

I was also able to run the simulation and the optimization problem with no problems on the recent dev branch.

I am now going to change up some parameters to see if I can improve the parameter estimation. BTW, I have a question regarding that: I am not quite sure how to see the parameter estimates after the optimization is completed. I have looked through the documentation and perhaps I’m missing something. I can’t find the commands for it and I don’t particularly see it in the results folder.

Second, I know with CADET-Match, I am able to perform parameter estimates with multiple experiments, how do I go about setting up a parameter estimate with multiple experiment traces? In my mind, I’m thinking I have to import each experiment and set them up separately as individual references for the same simulation?

I thank you again for all your assistance.

Hey Yemi,

I’m very happy to hear that and thanks again for your patience! ![]()

There should be a results.csv file in your working directory which contains the latest values. How does your results directory look like? Did you set a working_directory?

I’m also currently in the process of overhauling the documentation of the optimization module. I feel there needs to be a more general introduction into the general concepts before diving deeper into parameter estimation/process optimization scenarios.

Yes, that’s the idea. You can add multiple references to a single Comparator instance if they are to be compared with the same simulation results. This is useful if you have multiple runs of the same experiment or if you measure the same experiment at different positions of your system (e.g. different sensors).

If you have different experiments (i.e. different concentrations, flow rates, switch times,…), then you also need add separate simulations (i.e. evaluation objects), and correspondingly separate Comparator instances as evaluators/objectives.

Since CADET-Process aims providing more flexibility than CADET-Match, this might still seem a bit clunky. But with time we will add more and more convenience methods that will facilitate the setup of these scenarios. Currently, we focus on making the foundation more solid. As always, we’re happy to assist with the implementation.

Yes, I did set a working directory. Thanks to Lukas example code ![]() The results dir contains a callbacks folder, figures folder, checkpoint file, results_all CSV and a results_meta CSV.

The results dir contains a callbacks folder, figures folder, checkpoint file, results_all CSV and a results_meta CSV.

However, when I open these files, the numbers aren’t what I expect. For my current runs, I am estimating three parameters and I set the UB and LB for each (particle porosity, column porosity and axial dispersion), however, the numbers I’m seeing in the CSVs are not really within the bound - besides, I can’t tell which number represents which parameter.

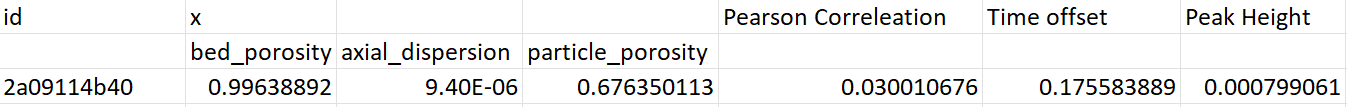

For example, the results_all and results_meta CSV contains columns: id, x, Pearson Correlation, Time Offset, Peak Height and two unnamed columns. The results_all contains many rows of data while the results_meta file contains only 4 rows of data. If, by my thinking, the results_all file shows all the data, then the last row represents the most recent estimates? But then which of the numbers represent which parameter?

I apologize if all this seems rudimentary, I have tried to find some answers in both Cadet and Cadet-Match documentation, but I’m still unsure of how to read the results. ![]()

This would be welcome, indeed ![]()

Excellent. That was what I thought. Thank you for the further explanation and breakdown.

I am very happy to provide feedback regarding my findings and I very much appreciate all the help and guidance you all have provided thus far.

All the best.

-Yemi

Hey Yemi,

First, I am also happy that the parameter estimation is running on your device, and thanks for the feedback.

Regarding the problem with the csv-file. As you mentioned the structure of the columns are id, x, Pearson Correlation, Time Offset, Peak Height. For your example, the x has three values for the bed porosity, the axial dispersion, and the particle porosity. So instead of one x row, there are three rows in the csv file for your variables. But the header was not shifted respectively. This means that the last three lines are related to Pearson Correlation, Time Offset, Peak Height and the three lines after the id are your variables. Maybe this picture helps:

In the script, I send you I added the transform='auto' in this line of code:

optimization_problem.add_variable('flow_sheet.column.particle_porosity', lb=0, ub=1, name='particle_porsosity', transform='auto')

The axial dispersion has different order of magnitude so the code will transform this variable between 0 and 1. If you just leave out the transform='auto' for now you will recognize that that the second row of x will be in the bound you set. I think Johannes will update that soon so that you can transform your variables and see the untransformed value in the csv file.

I hope this help.

Have a nice day and please feel free to reach out ![]()

Best,

Lukas

Hey,

I updated the save_csv method. It now correctly adds the columns with the parameter names, not just ‘x’.

Yes, again, my bad. ![]() As Lukas pointed out, CADET-Process internally automatically performs a parameter transform to make life easier for the optimizer. Obviously, it should return the untransformed values for the results. This should now also be fixed.

As Lukas pointed out, CADET-Process internally automatically performs a parameter transform to make life easier for the optimizer. Obviously, it should return the untransformed values for the results. This should now also be fixed.

It is! Thank you. The current results section has the proper heading and the non-transformed values. ![]()

I have another follow for @l.thiel, if you don’t mind. So I have two sets of replicated experiments that I am using to estimate parameters. The conditions are the same except for the flow rates - so two replicates at one flowrate and two replicates at another.

By my understanding, I load all four datasets, the data with the same flowrates are setup as multiple references for the same simulation (so I have two different simulations at each flow rate).

Each of these references are set up as their own evaluation objects, corresponding to separate comparators.

The portion of the code I’m not quite sure off is in the callbacks. Please see below. Is my implementation proper?

# Set Parameters

inlet_conc = 1000

feed_flowrate_75 = 0.48 # ml/min

feed_flowrate_300 = 1.92 # ml/min

vol_flowrate_75 = feed_flowrate_75*((1e-6)/60) # m^3 s-1, Convert feed flow rate units

vol_flowrate_300 = feed_flowrate_300*((1e-6)/60) # m^3 s-1, Convert feed flow rate units

col_length = 2.5 # cm, column length

col_ID = 0.7 # cm, column inner diameter

col_csa = np.pi*(col_ID/100)**2 # m^2, column cross sectional area

particle_diameter = 5e-5 # m

sim_duration_75 = time_75[-1] # s, Equivalent to the load time

sim_duration_300 = time_300[-1] # s, Equivalent to the load time

#### CADET-Process

component_system = ComponentSystem(1) # One component, i.e. target protein or material

binding_model = NoBinding(component_system, name='nobinding')

feed_unit = Source(component_system, name='feed')

feed_unit.c = [inlet_conc]

column = LumpedRateModelWithPores(component_system,name='column')

column.length = col_length/100 # m

column.diameter = col_ID/100 # m

column.axial_dispersion = 1e-7

column.bed_porosity = 0.4 # -

column.particle_porosity = 0.4 # -

column.particle_radius = particle_diameter/2 # m

column.film_diffusion = [0] #

column.binding_model = binding_model # Attach the configured binding model to the column

outlet = Sink(component_system, name='outlet')

flow_sheet = FlowSheet(component_system)

flow_sheet.add_unit(feed_unit)

flow_sheet.add_unit(column)

flow_sheet.add_unit(outlet)

flow_sheet.add_connection(feed_unit, column)

flow_sheet.add_connection(column, outlet)

process_75 = Process(flow_sheet, 'process_75')

process_75.cycle_time = sim_duration_75

process_75.add_event('feed_on', 'flow_sheet.feed.flow_rate', vol_flowrate_75, 0)

process_300 = Process(flow_sheet, 'process_300')

process_300.cycle_time = sim_duration_300

process_300.add_event('feed_on', 'flow_sheet.feed.flow_rate', vol_flowrate_300, 0)

process_simulator = Cadet()

simulation_results_75 = process_simulator.simulate(process_75)

_ = simulation_results_75.solution.column.outlet.plot()

simulation_results_300 = process_simulator.simulate(process_300)

_ = simulation_results_300.solution.column.outlet.plot()

# Reference

# Each replicate is given its own reference name

reference_75_1 = ReferenceIO('Exp_75_3', time_75_3, concentration_75_3)

reference_75_2 = ReferenceIO('Exp_75_4', time_75_4, concentration_75_4)

reference_300_1 = ReferenceIO('Exp_300_1', time_300_1, concentration_300_1)

reference_300_2 = ReferenceIO('Exp_300_2', time_300_2, concentration_300_2)

#Comparator

comparator_75 = Comparator()

comparator_75.add_reference(reference_75_1)

comparator_75.add_reference(reference_75_2)

comparator_75.add_difference_metric('Shape', reference_75_1, 'column.outlet', use_derivative=False)

comparator_75.add_difference_metric('Shape', reference_75_2, 'column.outlet', use_derivative=False)

metrics_75 = comparator_75.evaluate(simulation_results_75)

comparator_300 = Comparator()

comparator_300.add_reference(reference_300_1)

comparator_300.add_reference(reference_300_2)

comparator_300.add_difference_metric('Shape', reference_300_1, 'column.outlet', use_derivative=False)

comparator_300.add_difference_metric('Shape', reference_300_2, 'column.outlet', use_derivative=False)

metrics_300 = comparator_300.evaluate(simulation_results_300)

#Optimzation Problem

optimization_problem = OptimizationProblem('MSSpCC')

optimization_problem.add_evaluation_object(process_75)

optimization_problem.add_evaluation_object(process_300)

optimization_problem.add_variable('flow_sheet.column.bed_porosity', lb=0, ub=1, name='bed_poros', transform='auto')

optimization_problem.add_variable('flow_sheet.column.axial_dispersion', lb=1e-8, ub=1e-5, name='axial_dispersion', transform='auto')

optimization_problem.add_variable('flow_sheet.column.particle_porosity', lb=0, ub=1, name='particle_porosity', transform='auto')

optimization_problem.add_evaluator(process_simulator)

optimization_problem.add_objective(comparator_75,n_objectives=comparator_75.n_metrics, requires=[process_simulator])

optimization_problem.add_objective(comparator_300,n_objectives=comparator_300.n_metrics, requires=[process_simulator])

settings.working_directory = 'C:/Users/~/Desktop/Results_Parameter_est'

def callback(

simulation_results, individual, evaluation_object, callbacks_dir='./'):

comparator_75.plot_comparison(

simulation_results_75,

file_name=f'{callbacks_dir}/{individual.id}_{evaluation_object}_comparison.png',

show=False

)

comparator_300.plot_comparison(

simulation_results_300,

file_name=f'{callbacks_dir}/{individual.id}_{evaluation_object}_comparison.png',

show=False

)

optimization_problem.add_callback(

callback, requires=[process_simulator], frequency= 1, keep_progress=True

)

optimizer = U_NSGA3()

optimizer.progress_frequency = 1

optimizer.pop_size = 10

optimizer.n_max_gen = 5

optimizer.n_cores = 1

if __name__ == '__main__':

print('Starting optimization')

optimization_results = optimizer.optimize(

optimization_problem,

use_checkpoint=True

)

Thanks again to you both!